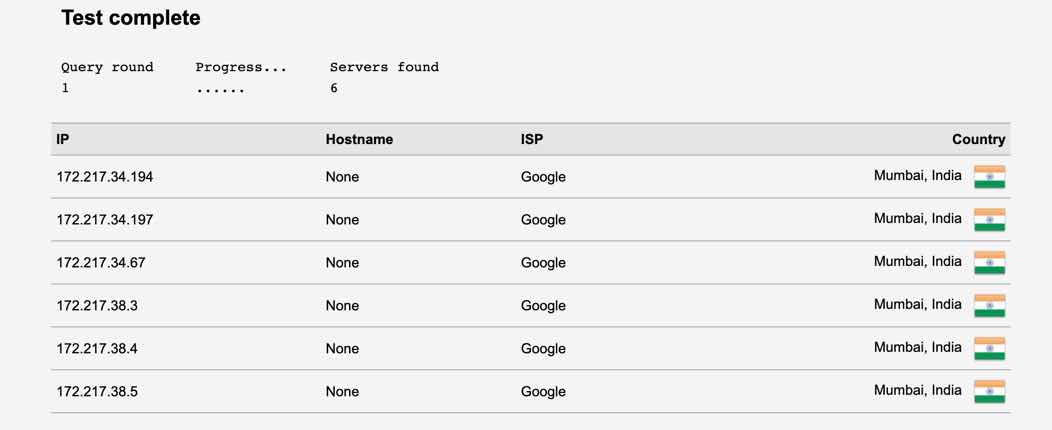

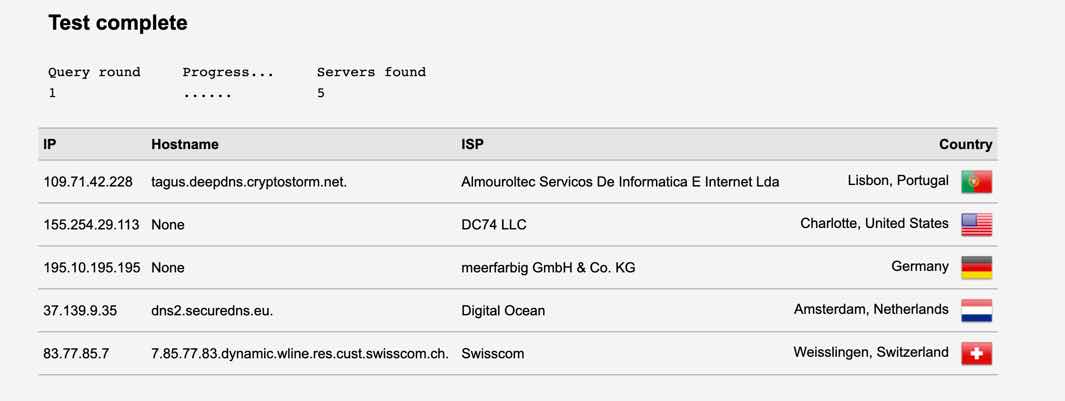

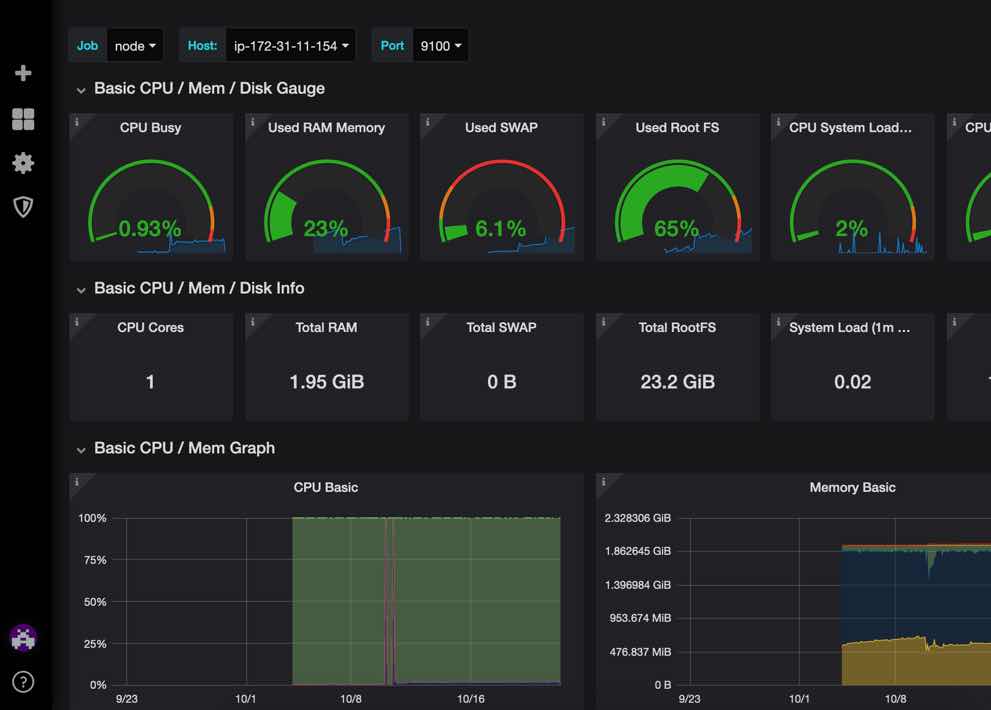

I had set up Pi-hole (plus a remote instance), DNSCrypt both locally and remotely, and Privoxy to clean up the bad web traffic that gets past Pi-hole. Things were looking good, and that’s when the EFF came out with their new tracking test: https://panopticlick.eff.org/

After all that effort, the Panopticlick results still do not pass with flying colors. This gives an idea of the extent to which tracking is prevalent.

Useful links & screenshots:

https://github.com/pi-hole/pi-hole/wiki/DNSCrypt-2.0

https://itchy.nl/raspberry-pi-3-with-openvpn-pihole-dnscrypt

root@ubuntu-s-1vcpu-1gb-xyz-01:~# apt install dnscrypt-proxy
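Once the package is installed, a quick sanity check helps confirm the proxy is actually answering queries. This is only a rough sketch: the listen address 127.0.2.1 is an assumption based on the default socket unit of the Debian/Ubuntu package, and `dig` comes from the separate `dnsutils` package, so adjust both to match your own setup.

```shell
# Check that the service came up after installation
systemctl status dnscrypt-proxy --no-pager

# Assumption: the packaged socket unit listens on 127.0.2.1:53.
# If your configuration uses another address or port, change it here.
RESOLVER_ADDR="127.0.2.1"

# Send a test query straight to dnscrypt-proxy (needs: apt install dnsutils)
dig @"$RESOLVER_ADDR" example.com +short
```

If the query returns an address, Pi-hole can then be pointed at that resolver as its upstream DNS server.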

Still no real privacy, though, as the logs are there!